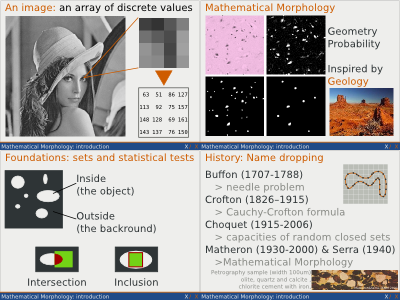

We will start by enumerating a few interesting properties of the geometric covariogram presented in previous sessions, and see how it can help in analyzing the patterns present in an image.

Most of these properties will of course be old news to those familiar with the covariance function, since the geometric covariogram is closely related to it.

Most of the following properties have been studied for specific mathematical objects called RACS (RAndom Closed Sets). A RACS is a mathematical model that makes it possible to describe real-world materials and textures whose constitution doesn't obey any simple equation (contrary to what would be the case for periodic tilings, for instance).

We won't describe RACS in further detail, but the concept is important enough to be pointed out here. In our illustration, however, we will consider the realisation of a specific RACS, which we arbitrarily build as a set of small rectangles with no large-scale order, but where the rectangles are gathered in medium-sized clusters. Each cluster is made of 4 rectangles, one at each corner of an invisible rectangle, so that the clusters themselves have a rectangle-like "shape" (more specifically, they have a rectangular convex hull).

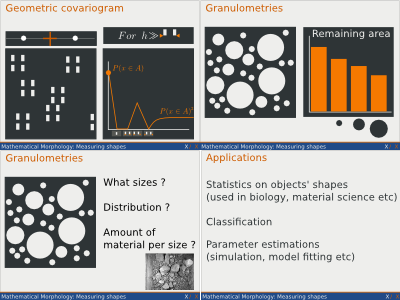

Let's now compute the geometric covariogram with two horizontally aligned points, for the image we've just described.

The geometric covariogram depends on h, the distance between the two points that together form our structuring element.

For h=0 the value of the geometric covariogram is quite simply the probability for a single point to fall on the white surface, which here is equal to the total area of all the small rectangles that make up the clusters, divided by the area of the image.

As h grows up to the width of the small rectangles, the probability that both points fall inside the white surface decreases.

If the separation between the small rectangles is big enough, this probability is 0 as soon as h exceeds the rectangles' width.

The probability sticks to zero until h is big enough to allow one of the points to be in a rectangle while the other is in another rectangle.

On our specific image, this happens when h reaches the width of the gap separating two rectangles of the same cluster.

The probability then rises from 0. For our specific image, it reaches a maximum when h is equal to the sum of the width of a rectangle and the width of the gap separating two rectangles, because that is where the bi-point used as a probe by the geometric covariogram has the most positions in which it fits in two rectangles at a time.

With a slightly bigger h, this is no longer true, and the bi-point finds fewer and fewer places where it can fit.

Still in our specific image, the probability decreases and eventually reaches 0 when h is equal to the sum of the width of the gap separating two small rectangles of the same cluster and twice the width of those rectangles.
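The walk along the curve described so far can be reproduced on a toy example. The following is only a minimal sketch in plain Python, not the measurement code used for the slides: the function name `covariogram`, the test row (two white runs of width 3 separated by a gap of 5) and the choice to count only the positions where both points lie inside the image are assumptions made for illustration.

```python
def covariogram(image, h):
    """Fraction of horizontal bi-point positions (x, x + h) with both ends white.

    `image` is a list of rows of 0/1 pixels. Only positions where both points
    lie inside the image are counted (an assumed edge-handling choice).
    """
    hits = 0
    total = 0
    for row in image:
        for x in range(len(row) - h):
            total += 1
            if row[x] and row[x + h]:
                hits += 1
    return hits / total


# Hypothetical test row: two white runs of width 3 separated by a gap of 5.
WIDTH, GAP = 3, 5
row = [0] * 10 + [1] * WIDTH + [0] * GAP + [1] * WIDTH + [0] * 40
image = [row] * 4  # four identical rows stand in for a 2D image

# Only shifts below half the image width are evaluated.
curve = [covariogram(image, h) for h in range(len(row) // 2)]

for h, value in enumerate(curve[:13]):
    print(h, round(value, 4))
```

On this row the curve drops to zero at h = 3, stays there through h = 5, peaks at h = 8 (width plus gap) and returns to zero at h = 11 (gap plus twice the width).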

If h continues to grow, interesting things happen.

First of all, from the point of view of the measurement practitioner, it is crucial to remember that the measurements are made on an image whose size, and thus whose information content, is finite.

Trying to investigate patterns whose typical size is of the same order as the image's size is a sure way to measure nonsense, because you simply won't have enough representative data. In this matter, Shannon's sampling theorem is still a solid safeguard: when measuring the geometric covariogram on an image, you should treat with much suspicion (or, even better, ignore altogether) whatever value you get for h greater than or equal to half of the image's width.

However, let's assume for a moment that our illustration image covers a much bigger domain than it actually does on the slides (and thus that we can see many more clusters of those white rectangles).

Then we would find that the geometric covariogram increases and eventually reaches a ceiling. This ceiling would typically be equal to the square of the first point of the covariogram (i.e. the square of the probability for a single point to fall inside a white rectangle). The reason is that, for h big enough with respect to the patterns we described in our image, there is no longer any dependence between rectangles over such a long distance, and thus the fact that one of the points falls in a rectangle doesn't tell us anything about whether the other point will also fall in a rectangle. The distance at which this phenomenon appears is usually called the "range".

Before having a look at another kind of measurement, it is important to note that the observations we made to explain the step-by-step construction of the geometric covariogram's curve are, in practice, used in reverse: you will typically measure the covariogram and then look in the image to match the remarkable points of the curve with patterns of corresponding size.
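The ceiling reached by the covariogram beyond the range, mentioned earlier, can be written down in a couple of lines. This is only a sketch under assumptions that are not spelled out in the session: the notation $X$ for the white set, $p$ for the probability that a point falls in $X$, $C(h)$ for the covariogram, and the stationarity of $X$ (so that $p$ does not depend on the position $x$) are all introduced here for illustration.

$$
C(h) = P(x \in X \text{ and } x + h \in X), \qquad C(0) = P(x \in X) = p
$$

$$
\text{for } h \text{ beyond the range:} \quad C(h) = P(x \in X)\, P(x + h \in X) = p \cdot p = C(0)^2
$$

For instance, if white pixels cover 10% of the image, the curve is expected to level off around 0.01.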

As we saw, the geometric covariogram is a powerful tool to investigate the scales of the various patterns present in an image. The features of its curve are related to the scales along a given direction, and if we measure the covariogram in all directions, we can investigate a little more than the scales and also get some clues about shapes.

We will now talk about another kind of tool, one that gives us a direct measure of the preponderance of shapes in an image.

This tool, called granulometry, is inspired by measurements that are typically made by mining companies to analyze the size of extracted rocks and the amount of material (e.g. mass) in each size population (e.g. for calibration).

Granulometries have the added benefit of having a rather intuitive interpretation as a mathematical model of sieves!

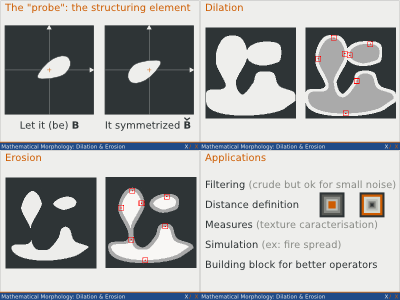

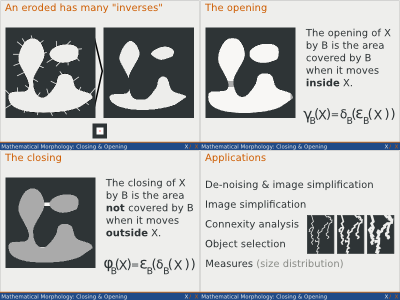

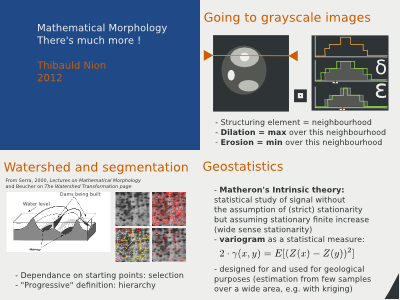

While the geometric covariogram was built upon the erosion operation, granulometries are based on the opening operation.

To measure the granulometry of an image, one must first choose the shape of the structuring element to be used.

Then the opening of the original image by the structuring element is computed, and the surface of the remaining white area is measured.

After that, another structuring element is considered, typically with the same shape as the previous one but bigger (e.g. a homothetic copy of the initial s.e.); the opening of the original image is performed and the remaining white area is measured to get a second point of the granulometry.

The process continues with bigger and bigger structuring elements, to build the full curve of the granulometry.
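The procedure above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions rather than a reference implementation: the structuring elements are taken to be squares of growing side, the opening is computed directly as the union of all squares that fit inside the white set, and the names `opening_by_square`, `white_area`, `granulometry` and `paint`, as well as the test image, are hypothetical.

```python
def opening_by_square(image, k):
    """Opening by a k-by-k square: union of all such squares that fit in the white set."""
    rows, cols = len(image), len(image[0])
    opened = [[0] * cols for _ in range(rows)]
    for r in range(rows - k + 1):
        for c in range(cols - k + 1):
            # Does the square with top-left corner (r, c) lie entirely in white?
            if all(image[r + i][c + j] for i in range(k) for j in range(k)):
                for i in range(k):
                    for j in range(k):
                        opened[r + i][c + j] = 1
    return opened


def white_area(image):
    """Number of white pixels in a 0/1 image."""
    return sum(sum(row) for row in image)


def granulometry(image, max_size):
    """White area left after opening the ORIGINAL image by squares of side 1..max_size."""
    return [white_area(opening_by_square(image, k)) for k in range(1, max_size + 1)]


def paint(image, top, left, size):
    """Draw a white square of the given size (helper for the toy image)."""
    for r in range(top, top + size):
        for c in range(left, left + size):
            image[r][c] = 1


# Hypothetical test image: one 2x2 and one 4x4 white square on a black background.
image = [[0] * 12 for _ in range(8)]
paint(image, 1, 1, 2)
paint(image, 2, 6, 4)

curve = granulometry(image, 5)
print(curve)  # [20, 20, 16, 16, 0]
```

The 2x2 square disappears once the structuring element reaches side 3, and the 4x4 square once it reaches side 5. Note that every opening is applied to the original image, not to the result of the previous step.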

By the properties of the opening, this process removes the objects that are smaller than the s.e. being used while keeping a fair part (depending on the shapes of the object and of the s.e.) of the bigger objects.

To a certain extent, what remains in the image after an opening by a given s.e. is what would remain in a sieve if the image's objects were filtered through it and if this sieve's holes had the shape of the structuring element.

The granulometric curve for a black and white image shows the number of pixels that are still painted white after each opening step.

It must be interpreted with care, since this is a number of pixels and not the number of objects itself, and there is usually no simple a priori relation between the number of objects (each possibly having any shape) and the number of white pixels.

Interestingly, it is often easier to "visualize" the presence of different shapes through the derivative of the granulometry, which, quite logically, shows the amount of material removed by each opening.
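For a sampled curve, the derivative mentioned above reduces to the difference between consecutive points. The sketch below assumes a hypothetical granulometric curve, namely the white pixel counts one would get for an image holding one 2x2 and one 4x4 white square, opened by squares of side 1 to 5. The function name `removed_per_step` is likewise an illustrative choice.

```python
def removed_per_step(curve):
    """Discrete derivative of a granulometry: pixels removed between consecutive sizes."""
    return [before - after for before, after in zip(curve, curve[1:])]


# Hypothetical curve: white pixels left after openings by squares of side 1..5.
sizes = [1, 2, 3, 4, 5]
curve = [20, 20, 16, 16, 0]

removed = removed_per_step(curve)
for size, amount in zip(sizes[1:], removed):
    print(f"side {size}: {amount} pixels removed")

print(removed)  # [0, 4, 0, 16]
```

The two non-zero entries stand out much more clearly than the steps of the original curve: 4 pixels vanish at side 3 (the 2x2 square) and 16 at side 5 (the 4x4 square).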

In the literature we find several uses for granulometries: as shape signatures for simple objects like disks, as classifiers for more complex objects like handwritten characters, and even as a signature for the image of a full document, where the granulometry synthesizes information about the sizes of the letters, words, lines, paragraphs and paragraph clusters.

Throughout this session and the previous one we saw several important morphological measures: the erosion and dilation curves, the geometric covariogram as a specific case of erosion curve and also as a bridge to more classical digital signal processing techniques, and the granulometries.

Each time it was made clear that these measures help in getting numbers related to the objects' shape.

In practice, they are indeed useful to estimate the sizes of patterns and objects, typically in biology, materials science and physics in general.

As we saw, they are also used for image classification.

And last but not least, they are of great use to people who model random media (like materials or biological tissue), helping to estimate a model's parameters or check its validity.